BioMedLM:

https://huggingface.co/stanford-crfm/BioMedLM

Mixtral_BioMedical:

https://huggingface.co/LeroyDyer/Mixtral_BioMedical_7b

Med-PaLM:

https://sites.research.google/med-palm/

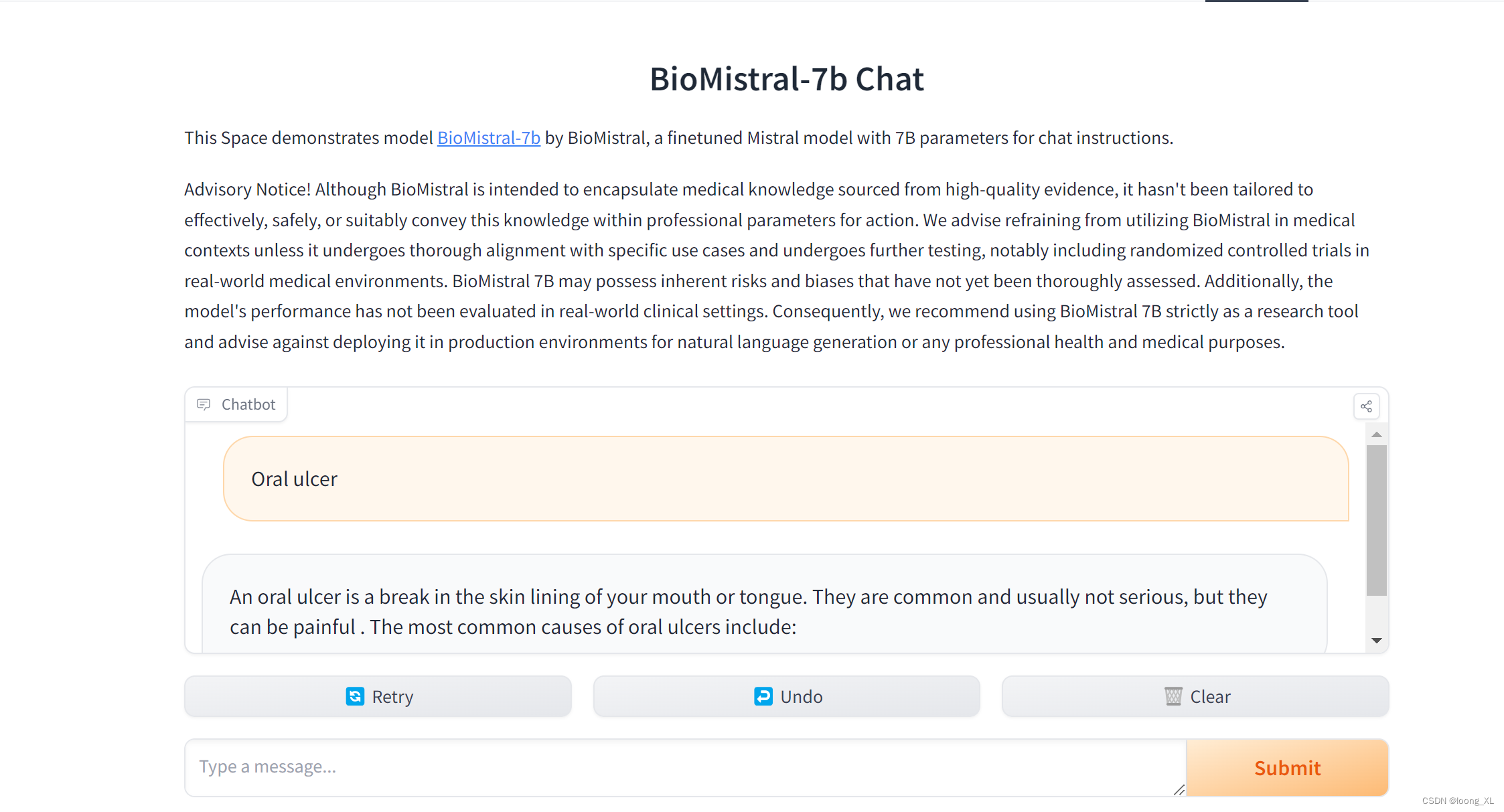

BioMistral:

https://huggingface.co/BioMistral/BioMistral-7B

BioMedGPT:

https://github.com/PharMolix/OpenBioMed/blob/main/README-CN.md

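It is essentially a domain-specific large model built from biomedical knowledge and multimodal data such as text and images, and used for knowledge-based question answering.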

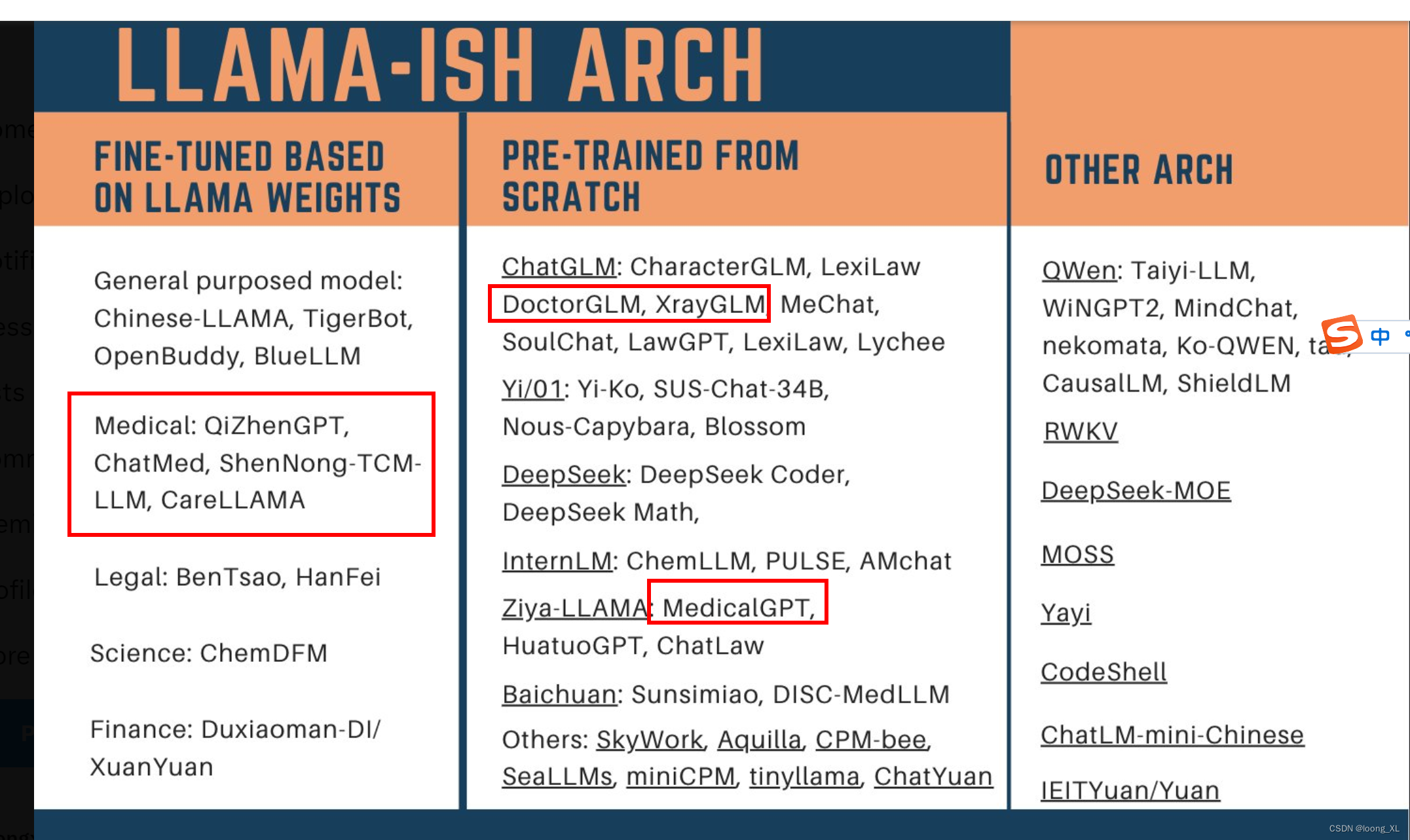

Related Chinese-language models:

Quick online demo in the browser:

https://huggingface.co/spaces/Artples/BioMistral-7b-Chat

Running the code:

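    # Requires: torch, transformers, accelerate, bitsandbytes (and a CUDA GPU).
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    model_id = "BioMistral/BioMistral-7B"

    # 4-bit NF4 quantization with double quantization; compute runs in bfloat16.
    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_use_double_quant=True,
        bnb_4bit_quant_type="nf4",
        bnb_4bit_compute_dtype=torch.bfloat16,
    )

    # device_map="auto" places the quantized weights on the available device(s).
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        quantization_config=bnb_config,
        device_map="auto",
        trust_remote_code=True,
    )

    eval_tokenizer = AutoTokenizer.from_pretrained(model_id, add_bos_token=True, trust_remote_code=True)

    eval_prompt = "The best way to "
    # Send the inputs to the model's device rather than hard-coding "cuda".
    model_input = eval_tokenizer(eval_prompt, return_tensors="pt").to(model.device)

    model.eval()
    with torch.no_grad():
        output_ids = model.generate(**model_input, max_new_tokens=100, repetition_penalty=1.15)

    # Decode the first (and only) generated sequence back to text.
    print(eval_tokenizer.decode(output_ids[0], skip_special_tokens=True))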
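To get a feel for why the 4-bit configuration above matters on a single GPU, here is a rough back-of-the-envelope sketch of weight memory. It is only an estimate under stated assumptions: the parameter count (`ASSUMED_PARAMS`) is an approximate figure for a Mistral-7B-class model, the block sizes (64 for the weights, 256 for double quantization) follow the scheme described in the QLoRA paper rather than anything read from the library at runtime, and the helper names are hypothetical. It treats every parameter as quantized, whereas in practice some layers (for example the embeddings) stay in higher precision, and it ignores activations and the KV cache, so real usage will be higher.

```python
# Rough weight-memory estimate for block-wise 4-bit quantization.
# Weights only: activations, KV cache and CUDA overhead are not counted.

ASSUMED_PARAMS = 7.24e9  # assumption: approximate Mistral-7B-class parameter count


def bits_per_param(weight_bits: int = 4, block_size: int = 64,
                   double_quant: bool = True, dq_block_size: int = 256) -> float:
    """Effective storage bits per parameter, including quantization constants."""
    if double_quant:
        # One 8-bit constant per block, plus one fp32 constant per group of blocks.
        overhead = 8 / block_size + 32 / (block_size * dq_block_size)
    else:
        # One fp32 scaling constant per block.
        overhead = 32 / block_size
    return weight_bits + overhead


def weight_gib(n_params: float, bits: float) -> float:
    """Convert a parameter count and bits-per-parameter into GiB."""
    return n_params * bits / 8 / 2**30


if __name__ == "__main__":
    for label, bits in [
        ("fp16 / bf16", 16.0),
        ("nf4", bits_per_param(double_quant=False)),
        ("nf4 + double quant", bits_per_param(double_quant=True)),
    ]:
        print(f"{label:>20}: {bits:.3f} bits/param, "
              f"~{weight_gib(ASSUMED_PARAMS, bits):.2f} GiB")
```

Under these assumptions, double quantization trims the per-parameter overhead of the scaling constants from 0.5 bits to roughly 0.127 bits, which is small per weight but adds up over several billion parameters.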