
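Level 1: ResNet

Task Description

In this level, you will learn the ResNet architecture and build it with PyTorch.

Background Knowledge

ResNet, proposed by Kaiming He et al. in 2015, is a classic and effective deep learning architecture. It was first applied to image classification and has since been validated on a wide range of other tasks. ResNet starts from an observation about deep networks such as VGG: once the depth passes a certain point, adding more layers makes classification performance worse rather than better. To address this degradation, ResNet introduces the residual structure.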

ResNet

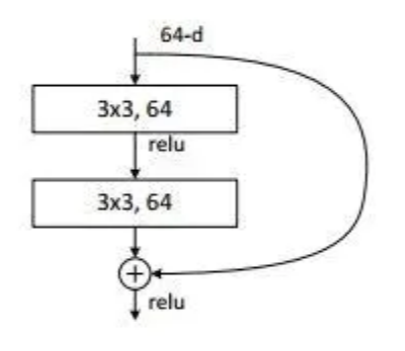

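A residual is the difference between an observed value and an estimated value. Suppose a residual block takes x as its input and is meant to fit the output H(x). If the input x is passed straight through to the output, then what the layers still have to learn is the residual F(x) = H(x) - x. The figure below shows a residual learning unit:

Figure 4-1-1 Residual structure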


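As the figure shows, residual learning uses several parameterized layers to learn the residual F(x) between input and output, whereas earlier networks learn the output H(x) directly from x. Because the shortcut carries x through unchanged, the block preserves the features of x. This helps mitigate vanishing gradients in deep networks and retains more of the information learned by earlier layers, which is what allows the network to be made deeper.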
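The effect of the shortcut on gradients can be illustrated with a toy scalar calculation. The sketch below is not part of the original material: it assumes every layer has the same small local derivative F'(x) (the hypothetical value `local_grad = 0.01`) and compares a plain stack, where the local derivatives multiply, with a residual stack, where each block y = x + F(x) contributes 1 + F'(x) because of the identity path.

```python
def plain_chain_gradient(local_grad: float, depth: int) -> float:
    """Gradient through `depth` plain layers: the product of local derivatives."""
    grad = 1.0
    for _ in range(depth):
        grad *= local_grad
    return grad


def residual_chain_gradient(local_grad: float, depth: int) -> float:
    """Gradient through `depth` residual blocks y = x + F(x).

    d(y)/d(x) = 1 + F'(x), so the identity path always contributes 1.
    """
    grad = 1.0
    for _ in range(depth):
        grad *= 1.0 + local_grad
    return grad


if __name__ == "__main__":
    local_grad = 0.01  # assumed local derivative per layer, for illustration only
    for depth in (1, 10, 50):
        print(depth,
              plain_chain_gradient(local_grad, depth),
              residual_chain_gradient(local_grad, depth))
```

Under this assumption the plain stack's gradient shrinks toward zero within a few dozen layers, while the residual stack's never drops below 1. Real networks have matrix-valued Jacobians rather than a single scalar, so this is only an intuition for why the identity path helps.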

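For computational efficiency, the deeper ResNet variants replace the regular residual block with a bottleneck block. Like the Inception network, it uses 1x1 convolutions to shrink and then restore the number of feature map channels, so the number of filters in the 3x3 convolution is decoupled from the channel count of the previous layer, and the block's output width likewise does not constrain the next module. Its structure is shown in the figure below:

Figure 4-1-2 Bottleneck structure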


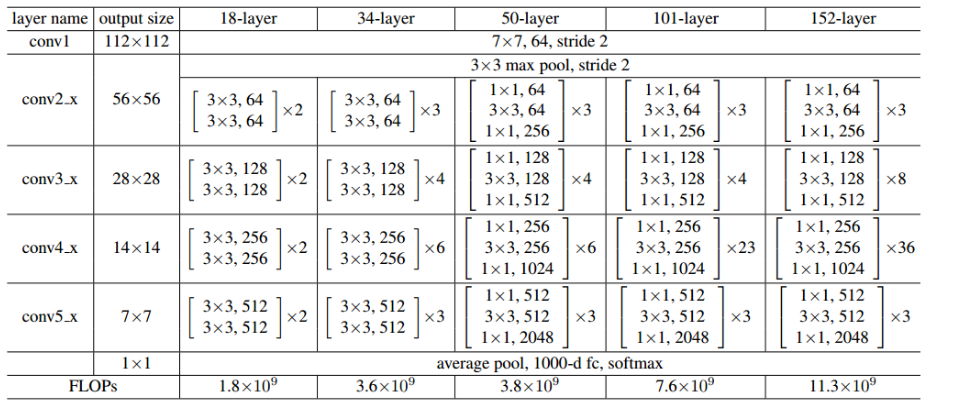
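The saving from the 1x1 convolutions can be checked with simple arithmetic. The sketch below counts convolution weights only (no bias, batch normalization ignored) and assumes a block width of 256 channels with a reduction factor of 4; both numbers are illustrative assumptions, and the helper names are hypothetical.

```python
def conv_params(in_channels: int, out_channels: int, kernel_size: int) -> int:
    """Weights in a bias-free 2D convolution: k * k * C_in * C_out."""
    return kernel_size * kernel_size * in_channels * out_channels


def basic_block_params(channels: int) -> int:
    """Two 3x3 convolutions that keep the channel count unchanged."""
    return 2 * conv_params(channels, channels, 3)


def bottleneck_params(channels: int, reduction: int = 4) -> int:
    """1x1 reduce -> 3x3 at reduced width -> 1x1 expand back to `channels`."""
    mid = channels // reduction
    return (conv_params(channels, mid, 1)
            + conv_params(mid, mid, 3)
            + conv_params(mid, channels, 1))


if __name__ == "__main__":
    width = 256  # assumed block width, for illustration only
    print("two 3x3 convs:", basic_block_params(width))  # 1179648
    print("bottleneck:   ", bottleneck_params(width))   # 69632
```

At this width the bottleneck needs roughly one seventeenth of the weights of two full-width 3x3 convolutions, which is why it becomes attractive once the channel counts grow.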

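ResNet is built by stacking these residual blocks layer upon layer. Depending on the depth, there are ResNet18, ResNet34, ResNet50, and other variants: ResNet18 and ResNet34 stack the basic block of two 3x3 convolutions, while ResNet50 and deeper variants stack the Bottleneck. The detailed network parameters are shown in the figure below:

Figure 4-1-3 ResNet network structure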


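In the figure above, each bracket describes one residual block (a basic block or a Bottleneck), and the multiplier after it is the number of times that block is repeated.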
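The numbers in the variant names follow from those repeat counts. As a rough check, the sketch below counts the convolutions inside the blocks and adds 2 for the first convolution and the final fully connected layer; shortcut projection convolutions are not counted. The dictionary and function names are hypothetical; the repeat counts are the ones used by the factory functions in this level.

```python
# Blocks per stage for each variant, and the block type each one stacks.
CONFIGS = {
    "ResNet18": ("basic", [2, 2, 2, 2]),
    "ResNet34": ("basic", [3, 4, 6, 3]),
    "ResNet50": ("bottleneck", [3, 4, 6, 3]),
    "ResNet101": ("bottleneck", [3, 4, 23, 3]),
    "ResNet152": ("bottleneck", [3, 8, 36, 3]),
}

# A basic block holds two 3x3 convolutions; a bottleneck holds 1x1 + 3x3 + 1x1.
CONVS_PER_BLOCK = {"basic": 2, "bottleneck": 3}


def weighted_layer_count(block_type: str, num_blocks: list) -> int:
    """Convolutions inside all blocks, plus the first conv and the final FC layer."""
    return CONVS_PER_BLOCK[block_type] * sum(num_blocks) + 2


if __name__ == "__main__":
    for name, (block_type, num_blocks) in CONFIGS.items():
        print(name, weighted_layer_count(block_type, num_blocks))
```

ResNet34 and ResNet50 share the same repeat counts `[3, 4, 6, 3]`; the difference in depth comes entirely from the block type.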

Building ResNet with PyTorch

The ResNet implementation is as follows:

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    # Residual block for ResNet18 and ResNet34: two 3x3 convolutions
    class BasicBlock(nn.Module):
        expansion = 1

        def __init__(self, in_planes, planes, stride=1):
            super(BasicBlock, self).__init__()
            self.conv1 = nn.Conv2d(in_planes, planes, kernel_size=3,
                                   stride=stride, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(planes)
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3,
                                   stride=1, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(planes)

            self.shortcut = nn.Sequential()
            # The shortcut's x must match the main path's output in
            # dimensions (spatial size and depth).
            # If it does not, add a convolution + BN to project it.
            if stride != 1 or in_planes != self.expansion*planes:
                self.shortcut = nn.Sequential(
                    nn.Conv2d(in_planes, self.expansion*planes,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(self.expansion*planes)
                )

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.bn2(self.conv2(out))
            out += self.shortcut(x)
            out = F.relu(out)
            return out


    # Residual block for ResNet50, 101 and 152: 1x1 + 3x3 + 1x1 convolutions
    class Bottleneck(nn.Module):
        # The first 1x1 and the 3x3 convolutions have the same number of
        # filters; the last 1x1 convolution has `expansion` times as many.
        expansion = 4

        def __init__(self, in_planes, planes, stride=1):
            super(Bottleneck, self).__init__()
            self.conv1 = nn.Conv2d(in_planes, planes, kernel_size=1, bias=False)
            self.bn1 = nn.BatchNorm2d(planes)
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3,
                                   stride=stride, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(planes)
            self.conv3 = nn.Conv2d(planes, self.expansion*planes,
                                   kernel_size=1, bias=False)
            self.bn3 = nn.BatchNorm2d(self.expansion*planes)

            self.shortcut = nn.Sequential()
            if stride != 1 or in_planes != self.expansion*planes:
                self.shortcut = nn.Sequential(
                    nn.Conv2d(in_planes, self.expansion*planes,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(self.expansion*planes)
                )

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = F.relu(self.bn2(self.conv2(out)))
            out = self.bn3(self.conv3(out))
            out += self.shortcut(x)
            out = F.relu(out)
            return out


    class ResNet(nn.Module):
        def __init__(self, block, num_blocks, num_classes=10):
            super(ResNet, self).__init__()
            self.in_planes = 64

            self.conv1 = nn.Conv2d(3, 64, kernel_size=3,
                                   stride=1, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)
            self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)
            self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)
            self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)
            self.linear = nn.Linear(512*block.expansion, num_classes)

        def _make_layer(self, block, planes, num_blocks, stride):
            # Only the first block of a stage uses the given stride;
            # the remaining blocks use stride 1.
            strides = [stride] + [1]*(num_blocks-1)
            layers = []
            for stride in strides:
                layers.append(block(self.in_planes, planes, stride))
                self.in_planes = planes * block.expansion
            return nn.Sequential(*layers)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.layer1(out)
            out = self.layer2(out)
            out = self.layer3(out)
            out = self.layer4(out)
            out = F.avg_pool2d(out, 4)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out


    def ResNet18():
        return ResNet(BasicBlock, [2,2,2,2])


    def ResNet34():
        return ResNet(BasicBlock, [3,4,6,3])


    def ResNet50():
        return ResNet(Bottleneck, [3,4,6,3])


    def ResNet101():
        return ResNet(Bottleneck, [3,4,23,3])


    def ResNet152():
        return ResNet(Bottleneck, [3,8,36,3])
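To see how the strides, the fixed `avg_pool2d(out, 4)` and the size of the final linear layer fit together, the spatial size can be traced by hand. The sketch below uses the standard convolution output-size formula and mirrors the `ResNet` class above (3x3 stem with stride 1, four stages with strides 1, 2, 2, 2). The function names are hypothetical, and the 32x32 input is an assumption rather than something the listing states.

```python
def conv_out_size(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of a convolution or pooling: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet_shapes(input_size: int, expansion: int) -> list:
    """Return (name, channels, spatial size) after the stem, each stage and the pooling."""
    shapes = []
    size = conv_out_size(input_size, kernel=3, stride=1, padding=1)
    shapes.append(("conv1", 64, size))
    for name, planes, stride in [("layer1", 64, 1), ("layer2", 128, 2),
                                 ("layer3", 256, 2), ("layer4", 512, 2)]:
        # Only the first block of a stage downsamples, through a 3x3 conv with
        # padding 1; the remaining blocks keep the spatial size.
        size = conv_out_size(size, kernel=3, stride=stride, padding=1)
        shapes.append((name, planes * expansion, size))
    # avg_pool2d(out, 4): kernel 4, stride defaults to the kernel size, no padding.
    size = conv_out_size(size, kernel=4, stride=4, padding=0)
    shapes.append(("avg_pool", 512 * expansion, size))
    return shapes


if __name__ == "__main__":
    for row in trace_resnet_shapes(input_size=32, expansion=4):
        print(row)
```

With a 32x32 input the trace ends at 1x1, so the flattened vector has exactly `512*block.expansion` elements, matching `self.linear`. With a 224x224 input the same trace ends at 7x7 and the flattened vector would no longer match the linear layer, which suggests this listing targets small inputs such as CIFAR-10 images.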

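Programming Requirements

Following the hints, complete the code in the editor on the right to finish building the ResNet network.

Test Instructions

The platform will test the code you write.

Start your task now. Good luck!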

    import torch.nn as nn
    import torch.nn.functional as F


    # Residual block for ResNet18 and ResNet34: two 3x3 convolutions
    class BasicBlock(nn.Module):
        expansion = 1

        def __init__(self, in_planes, planes, stride=1):
            super(BasicBlock, self).__init__()
            ########## Begin ##########
            # 2D convolution: in_planes input channels, planes output channels,
            # 3x3 kernel, stride `stride`, padding 1, no bias
            self.conv1 = nn.Conv2d(in_planes, planes, kernel_size=3,
                                   stride=stride, padding=1, bias=False)
            # Batch normalization on conv1's output, with as many channels
            # as conv1 outputs
            self.bn1 = nn.BatchNorm2d(planes)
            # Second 2D convolution: planes input and output channels,
            # 3x3 kernel, stride 1, padding 1, no bias
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3,
                                   stride=1, padding=1, bias=False)
            # Batch normalization on conv2's output, with as many channels
            # as conv2 outputs
            self.bn2 = nn.BatchNorm2d(planes)
            # Start with an empty Sequential as the shortcut connection; it
            # stays empty when the input and output dimensions already match
            self.shortcut = nn.Sequential()
            # The shortcut's x must match the main path's output in
            # dimensions (spatial size and depth).
            # If it does not, add a convolution + BN to project it.
            if stride != 1 or in_planes != self.expansion*planes:
                self.shortcut = nn.Sequential(
                    nn.Conv2d(in_planes, self.expansion*planes,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(self.expansion*planes)
                )
            ########## End ##########

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.bn2(self.conv2(out))
            out += self.shortcut(x)
            out = F.relu(out)
            return out


    # Residual block for ResNet50, 101 and 152: 1x1 + 3x3 + 1x1 convolutions
    class Bottleneck(nn.Module):
        # The first 1x1 and the 3x3 convolutions have the same number of
        # filters; the last 1x1 convolution has `expansion` times as many.
        expansion = 4

        def __init__(self, in_planes, planes, stride=1):
            super(Bottleneck, self).__init__()
            ########## Begin ##########
            # conv1: 2D convolution with in_planes input channels, planes
            # output channels, 1x1 kernel, no bias
            self.conv1 = nn.Conv2d(in_planes, planes, kernel_size=1, bias=False)
            # bn1: batch normalization on conv1's output (planes channels)
            self.bn1 = nn.BatchNorm2d(planes)
            # conv2: 2D convolution with a 3x3 kernel
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3,
                                   stride=stride, padding=1, bias=False)
            # bn2: batch normalization on conv2's output
            self.bn2 = nn.BatchNorm2d(planes)
            # conv3: 2D convolution with a 1x1 kernel
            self.conv3 = nn.Conv2d(planes, self.expansion*planes,
                                   kernel_size=1, bias=False)
            # bn3: batch normalization on conv3's output
            self.bn3 = nn.BatchNorm2d(self.expansion*planes)
            # Start with an empty Sequential as the shortcut
            self.shortcut = nn.Sequential()
            # If stride is not 1, or in_planes differs from planes times the
            # expansion factor, the shortcut must project x
            if stride != 1 or in_planes != self.expansion*planes:
                self.shortcut = nn.Sequential(
                    # Add a 1x1 convolution
                    nn.Conv2d(in_planes, self.expansion*planes,
                              kernel_size=1, stride=stride, bias=False),
                    # Batch normalization on the 1x1 convolution's output
                    nn.BatchNorm2d(self.expansion*planes)
                )
            ########## End ##########

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = F.relu(self.bn2(self.conv2(out)))
            out = self.bn3(self.conv3(out))
            out += self.shortcut(x)
            out = F.relu(out)
            return out


    class ResNet(nn.Module):
        def __init__(self, block, num_blocks, num_classes=1000):
            super(ResNet, self).__init__()
            self.in_planes = 64

            self.conv1 = nn.Conv2d(3, 64, kernel_size=3,
                                   stride=1, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)
            self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)
            self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)
            self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)
            self.linear = nn.Linear(512*block.expansion, num_classes)

        def _make_layer(self, block, planes, num_blocks, stride):
            strides = [stride] + [1]*(num_blocks-1)
            layers = []
            for stride in strides:
                layers.append(block(self.in_planes, planes, stride))
                self.in_planes = planes * block.expansion
            return nn.Sequential(*layers)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.layer1(out)
            out = self.layer2(out)
            out = self.layer3(out)
            out = self.layer4(out)
            out = F.avg_pool2d(out, 4)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out


    def ResNet50():
        return ResNet(Bottleneck, [3,4,6,3])


    print(ResNet50())