A Real-Time Click-to-Track Object Tracking System Based on YOLO12 and OpenCV

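Object detection and object tracking are two core tasks in computer vision. This article presents a real-time tracking system that combines a YOLO detection model with OpenCV tracking algorithms. The user selects a specific target with the mouse, and the system then tracks it continuously. Several tracking algorithms can be switched in, which makes the system suitable for scenarios such as video surveillance and behavior analysis.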

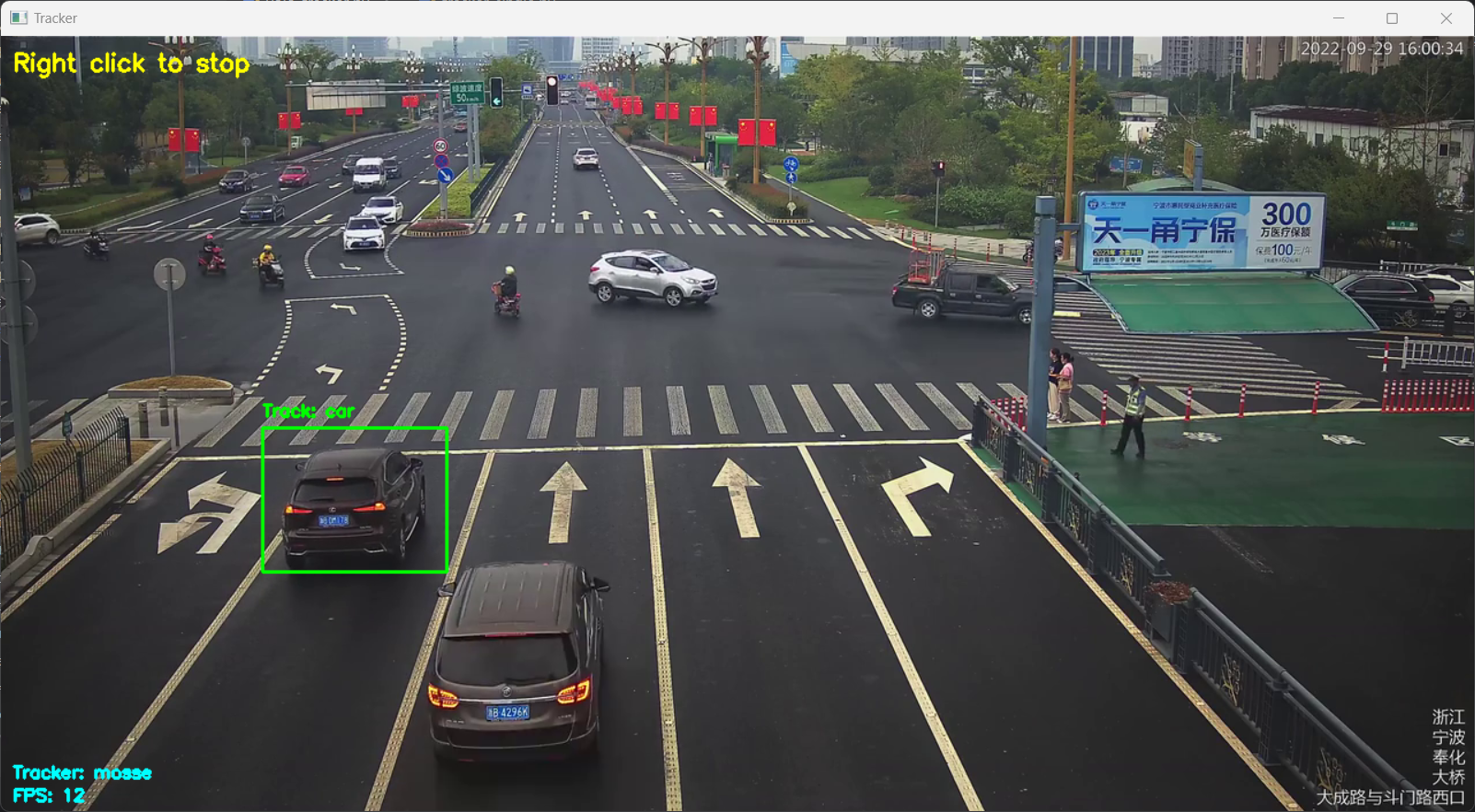

[Image Algorithms - 13] A Real-Time Click-to-Track Object Tracking System Based on YOLO12 and OpenCV

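System Overview

The system provides the following features:

- Detects objects in video frames with a YOLO model and labels each object with its class

- Lets the user left-click a detected object to start tracking it

- Offers three classic tracking algorithms to choose from: MOSSE, CSRT, and KCF

- Displays the tracking status, the target class, and the FPS in real time

- Stops tracking on right-click and returns to detection mode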

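How It Works

The system uses a hybrid "detect + track" architecture:

- First, the YOLO model detects objects and returns the bounding box and class label of every object in the frame

- Once the user selects a target, the chosen tracking algorithm starts following that target

- While tracking, the target position is updated on every frame; if tracking fails, "Lost" is displayed

- The whole process is shown in an interactive window that responds to mouse input

This architecture combines YOLO's detection accuracy with the speed of classical trackers. It keeps reasonable accuracy while staying real-time. The speed gain comes from letting the lightweight tracker, rather than the detector, process the frames once a target has been selected.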
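The detect-then-track cycle described above is essentially a two-state machine. The following minimal sketch models only the mode switching, with no OpenCV or YOLO calls. The `ModeController` class and its method names are hypothetical and are not part of the article's code, which keeps this state in global variables instead.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (x, y, w, h), the layout detect_objects() returns


@dataclass
class ModeController:
    """Hypothetical two-state controller: 'detect' until a click selects a box, then 'track'."""
    mode: str = "detect"
    target: Optional[Box] = None

    def on_left_click(self, x: int, y: int, detections: List[Box]) -> None:
        # A click only selects a target while in detection mode
        if self.mode != "detect":
            return
        for bx, by, bw, bh in detections:
            if bx <= x <= bx + bw and by <= y <= by + bh:
                self.mode, self.target = "track", (bx, by, bw, bh)
                return

    def on_right_click(self) -> None:
        # A right click always returns to detection mode
        self.mode, self.target = "detect", None

    def on_tracker_update(self, ok: bool, box: Optional[Box] = None) -> None:
        # A failed update ("Lost") also falls back to detection mode
        if self.mode != "track":
            return
        if ok:
            self.target = box
        else:
            self.mode, self.target = "detect", None


ctrl = ModeController()
ctrl.on_left_click(50, 50, [(0, 0, 10, 10), (40, 40, 30, 30)])
print(ctrl.mode, ctrl.target)  # track (40, 40, 30, 30)
ctrl.on_tracker_update(False)
print(ctrl.mode)  # detect
```

In the real program, `on_tracker_update` would correspond to the result of `tracker.update(frame)`, and the click handlers to the mouse callback.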

Code Walkthrough

Core Dependencies

```python
import cv2                    # video I/O and tracking algorithms
import numpy as np            # numerical computation
import argparse               # command-line argument parsing
from ultralytics import YOLO  # YOLO object detection
```

Global Variables and Interaction Design

Global variables hold the tracking state and the user-interaction information:

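```python
selected_box = None        # bounding box of the selected target
tracking = False           # whether a target is currently being tracked
target_class = None        # class label of the tracked target
click_x, click_y = -1, -1  # coordinates of the last left click
mouse_clicked = False      # set by the mouse callback, consumed by the main loop
debug = False              # debug-mode flag
```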

The mouse callback handles user interaction:

```python
def mouse_callback(event, x, y, flags, param):
    global click_x, click_y, mouse_clicked, tracking
    if event == cv2.EVENT_LBUTTONDOWN:    # left click: select a target
        click_x, click_y = x, y
        mouse_clicked = True
        if debug:
            print(f"Left click at: ({x}, {y})")
    elif event == cv2.EVENT_RBUTTONDOWN:  # right click: stop tracking
        tracking = False
        if debug:
            print("Tracking stopped")
```
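The article keeps the interaction state in module-level globals. `cv2.setMouseCallback` also accepts an optional `param` argument, which OpenCV passes back to the callback as its last argument. The same logic can therefore live in a small state object instead of globals. The following is a minimal sketch; the `ClickState` class is hypothetical. When OpenCV is not importable, the event codes fall back to their numeric values (1 and 2), so the logic can be exercised without opening a window.

```python
try:
    import cv2
    EVENT_LBUTTONDOWN, EVENT_RBUTTONDOWN = cv2.EVENT_LBUTTONDOWN, cv2.EVENT_RBUTTONDOWN
except ImportError:  # lets the logic be tested without OpenCV installed
    EVENT_LBUTTONDOWN, EVENT_RBUTTONDOWN = 1, 2


class ClickState:
    """Hypothetical container replacing the click_x/click_y/mouse_clicked/tracking globals."""

    def __init__(self):
        self.click = None      # (x, y) of the last unprocessed left click
        self.tracking = False

    def consume_click(self):
        """Return the pending click (or None) and clear it, so each click is handled once."""
        click, self.click = self.click, None
        return click


def mouse_callback(event, x, y, flags, state):
    # `state` is the object registered through setMouseCallback's `param` argument
    if event == EVENT_LBUTTONDOWN:
        state.click = (x, y)
    elif event == EVENT_RBUTTONDOWN:
        state.tracking = False


# Registration in the real program (requires a GUI window):
# state = ClickState()
# cv2.namedWindow("Tracker")
# cv2.setMouseCallback("Tracker", mouse_callback, state)
```

Because `consume_click` clears the pending click, a click made while a target is being tracked cannot linger and trigger a selection later.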

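Tracker Creation

The code creates a tracker instance for the selected algorithm. In recent OpenCV releases, these tracker constructors are exposed through the `legacy` module of the contrib build: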

```python
def create_tracker(tracker_type):
    """Create a tracker through the cv2.legacy module (compatible with recent OpenCV versions)."""
    try:
        if tracker_type == "mosse":
            return cv2.legacy.TrackerMOSSE_create()
        elif tracker_type == "csrt":
            return cv2.legacy.TrackerCSRT_create()
        elif tracker_type == "kcf":
            return cv2.legacy.TrackerKCF_create()
        else:
            print(f"Unsupported tracker: {tracker_type}, using MOSSE")
            return cv2.legacy.TrackerMOSSE_create()
    except AttributeError as e:
        # cv2.legacy is missing when only the base opencv-python package is installed
        print(f"Failed to create tracker: {e}")
        print("Please check OpenCV installation (must include opencv-contrib-python)")
        return None
```
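A script may need to run on OpenCV builds that expose the trackers either under `cv2.legacy` or at the top level of `cv2`. In that case, a lookup table with a fallback keeps the factory short. The following sketch works under that assumption. The function name `create_tracker_compat` and its `module` parameter are hypothetical. The parameter exists only so the lookup can be tested with a stand-in object; in practice the `cv2` module is used.

```python
from types import SimpleNamespace

# Constructor names used by opencv-contrib-python
TRACKER_FACTORIES = {
    "mosse": "TrackerMOSSE_create",
    "csrt": "TrackerCSRT_create",
    "kcf": "TrackerKCF_create",
}


def create_tracker_compat(tracker_type, module=None):
    """Look up the constructor in module.legacy first, then in module itself; None if absent."""
    if module is None:
        import cv2 as module  # real use: the OpenCV module
    name = TRACKER_FACTORIES.get(tracker_type.lower())
    if name is None:
        print(f"Unsupported tracker: {tracker_type}, using MOSSE")
        name = TRACKER_FACTORIES["mosse"]
    for namespace in (getattr(module, "legacy", None), module):
        factory = getattr(namespace, name, None) if namespace is not None else None
        if factory is not None:
            return factory()
    print(f"{name} not found; install opencv-contrib-python")
    return None


# Stand-in module to exercise the lookup without OpenCV
fake_cv2 = SimpleNamespace(
    TrackerKCF_create=lambda: "kcf-instance",
    legacy=SimpleNamespace(TrackerMOSSE_create=lambda: "mosse-instance"),
)
print(create_tracker_compat("kcf", fake_cv2))    # kcf-instance (top-level fallback)
print(create_tracker_compat("mosse", fake_cv2))  # mosse-instance (found in legacy)
```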

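OpenCV Tracking Demo Source Code

For reference, here is the Python tracker sample from the OpenCV repository (`samples/python/tracker.py`). It demonstrates OpenCV's newer tracker API: MIL, plus the DNN-based GOTURN, DaSiamRPN, NanoTrack, and ViT trackers. It also imports the `video.py` helper module that ships in the same samples directory.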

```python
#!/usr/bin/env python
'''
Tracker demo

For usage download models by following links
For GOTURN:
    goturn.prototxt and goturn.caffemodel: https://github.com/opencv/opencv_extra/tree/c4219d5eb3105ed8e634278fad312a1a8d2c182d/testdata/tracking
For DaSiamRPN:
    network:     https://www.dropbox.com/s/rr1lk9355vzolqv/dasiamrpn_model.onnx?dl=0
    kernel_r1:   https://www.dropbox.com/s/999cqx5zrfi7w4p/dasiamrpn_kernel_r1.onnx?dl=0
    kernel_cls1: https://www.dropbox.com/s/qvmtszx5h339a0w/dasiamrpn_kernel_cls1.onnx?dl=0
For NanoTrack:
    nanotrack_backbone: https://github.com/HonglinChu/SiamTrackers/blob/master/NanoTrack/models/nanotrackv2/nanotrack_backbone_sim.onnx
    nanotrack_headneck: https://github.com/HonglinChu/SiamTrackers/blob/master/NanoTrack/models/nanotrackv2/nanotrack_head_sim.onnx

USAGE:
    tracker.py [-h] [--input INPUT_VIDEO]
               [--tracker_algo TRACKER_ALGO (mil, goturn, dasiamrpn, nanotrack, vittrack)]
               [--goturn GOTURN_PROTOTXT]
               [--goturn_model GOTURN_MODEL]
               [--dasiamrpn_net DASIAMRPN_NET]
               [--dasiamrpn_kernel_r1 DASIAMRPN_KERNEL_R1]
               [--dasiamrpn_kernel_cls1 DASIAMRPN_KERNEL_CLS1]
               [--nanotrack_backbone NANOTRACK_BACKBONE]
               [--nanotrack_headneck NANOTRACK_TARGET]
               [--vittrack_net VITTRACK_MODEL]
               [--tracking_score_threshold TRACKING SCORE THRESHOLD FOR ONLY VITTRACK]
               [--backend CHOOSE ONE OF COMPUTATION BACKEND]
               [--target CHOOSE ONE OF COMPUTATION TARGET]
'''

# Python 2/3 compatibility
from __future__ import print_function

import sys

import numpy as np
import cv2 as cv
import argparse

from video import create_capture, presets  # helper module from OpenCV's samples/python

backends = (cv.dnn.DNN_BACKEND_DEFAULT, cv.dnn.DNN_BACKEND_HALIDE, cv.dnn.DNN_BACKEND_INFERENCE_ENGINE, cv.dnn.DNN_BACKEND_OPENCV,
            cv.dnn.DNN_BACKEND_VKCOM, cv.dnn.DNN_BACKEND_CUDA)
targets = (cv.dnn.DNN_TARGET_CPU, cv.dnn.DNN_TARGET_OPENCL, cv.dnn.DNN_TARGET_OPENCL_FP16, cv.dnn.DNN_TARGET_MYRIAD,
           cv.dnn.DNN_TARGET_VULKAN, cv.dnn.DNN_TARGET_CUDA, cv.dnn.DNN_TARGET_CUDA_FP16)


class App(object):

    def __init__(self, args):
        self.args = args
        self.trackerAlgorithm = args.tracker_algo
        self.tracker = self.createTracker()

    def createTracker(self):
        # Use self.args throughout, so the class does not depend on the module-level `args`
        if self.trackerAlgorithm == 'mil':
            tracker = cv.TrackerMIL_create()
        elif self.trackerAlgorithm == 'goturn':
            params = cv.TrackerGOTURN_Params()
            params.modelTxt = self.args.goturn
            params.modelBin = self.args.goturn_model
            tracker = cv.TrackerGOTURN_create(params)
        elif self.trackerAlgorithm == 'dasiamrpn':
            params = cv.TrackerDaSiamRPN_Params()
            params.model = self.args.dasiamrpn_net
            params.kernel_cls1 = self.args.dasiamrpn_kernel_cls1
            params.kernel_r1 = self.args.dasiamrpn_kernel_r1
            params.backend = self.args.backend
            params.target = self.args.target
            tracker = cv.TrackerDaSiamRPN_create(params)
        elif self.trackerAlgorithm == 'nanotrack':
            params = cv.TrackerNano_Params()
            params.backbone = self.args.nanotrack_backbone
            params.neckhead = self.args.nanotrack_headneck
            params.backend = self.args.backend
            params.target = self.args.target
            tracker = cv.TrackerNano_create(params)
        elif self.trackerAlgorithm == 'vittrack':
            params = cv.TrackerVit_Params()
            params.net = self.args.vittrack_net
            params.tracking_score_threshold = self.args.tracking_score_threshold
            params.backend = self.args.backend
            params.target = self.args.target
            tracker = cv.TrackerVit_create(params)
        else:
            sys.exit("Tracker {} is not recognized. Please use one of: mil, goturn, dasiamrpn, nanotrack, vittrack.".format(self.trackerAlgorithm))
        return tracker

    def initializeTracker(self, image):
        while True:
            print('==> Select object ROI for tracker ...')
            bbox = cv.selectROI('tracking', image)
            print('ROI: {}'.format(bbox))
            if bbox[2] <= 0 or bbox[3] <= 0:
                sys.exit("ROI selection cancelled. Exiting...")

            try:
                self.tracker.init(image, bbox)
            except Exception as e:
                print('Unable to initialize tracker with requested bounding box. Is there any object?')
                print(e)
                print('Try again ...')
                continue

            return

    def run(self):
        videoPath = self.args.input
        print('Using video: {}'.format(videoPath))
        camera = create_capture(cv.samples.findFileOrKeep(videoPath), presets['cube'])
        if not camera.isOpened():
            sys.exit("Can't open video stream: {}".format(videoPath))

        ok, image = camera.read()
        if not ok:
            sys.exit("Can't read first frame")
        assert image is not None

        cv.namedWindow('tracking')
        self.initializeTracker(image)

        print("==> Tracking is started. Press 'SPACE' to re-initialize tracker or 'ESC' for exit...")

        while camera.isOpened():
            ok, image = camera.read()
            if not ok:
                print("Can't read frame")
                break

            ok, newbox = self.tracker.update(image)
            #print(ok, newbox)

            if ok:
                cv.rectangle(image, newbox, (200, 0, 0))

            cv.imshow("tracking", image)
            k = cv.waitKey(1)
            if k == 32:  # SPACE
                self.initializeTracker(image)
            if k == 27:  # ESC
                break

        print('Done')


if __name__ == '__main__':
    print(__doc__)
    parser = argparse.ArgumentParser(description="Run tracker")
    parser.add_argument("--input", type=str, default="vtest.avi", help="Path to video source")
    parser.add_argument("--tracker_algo", type=str, default="nanotrack", help="One of available tracking algorithms: mil, goturn, dasiamrpn, nanotrack, vittrack")
    parser.add_argument("--goturn", type=str, default="goturn.prototxt", help="Path to GOTURN architecture")
    parser.add_argument("--goturn_model", type=str, default="goturn.caffemodel", help="Path to GOTURN model")
    parser.add_argument("--dasiamrpn_net", type=str, default="dasiamrpn_model.onnx", help="Path to onnx model of DaSiamRPN net")
    parser.add_argument("--dasiamrpn_kernel_r1", type=str, default="dasiamrpn_kernel_r1.onnx", help="Path to onnx model of DaSiamRPN kernel_r1")
    parser.add_argument("--dasiamrpn_kernel_cls1", type=str, default="dasiamrpn_kernel_cls1.onnx", help="Path to onnx model of DaSiamRPN kernel_cls1")
    parser.add_argument("--nanotrack_backbone", type=str, default="nanotrack_backbone_sim.onnx", help="Path to onnx model of NanoTrack backBone")
    parser.add_argument("--nanotrack_headneck", type=str, default="nanotrack_head_sim.onnx", help="Path to onnx model of NanoTrack headNeck")
    parser.add_argument("--vittrack_net", type=str, default="vitTracker.onnx", help="Path to onnx model of vittrack")
    # A default is needed: assigning None to params.tracking_score_threshold would fail
    parser.add_argument('--tracking_score_threshold', type=float, default=0.3, help="Tracking score threshold. If a bbox of score >= 0.3, it is considered as found ")
    parser.add_argument('--backend', choices=backends, default=cv.dnn.DNN_BACKEND_DEFAULT, type=int,
                        help="Choose one of computation backends: "
                             "%d: automatically (by default), "
                             "%d: Halide language (http://halide-lang.org/), "
                             "%d: Intel's Deep Learning Inference Engine (https://software.intel.com/openvino-toolkit), "
                             "%d: OpenCV implementation, "
                             "%d: VKCOM, "
                             "%d: CUDA" % backends)
    parser.add_argument("--target", choices=targets, default=cv.dnn.DNN_TARGET_CPU, type=int,
                        help="Choose one of target computation devices: "
                             '%d: CPU target (by default), '
                             '%d: OpenCL, '
                             '%d: OpenCL fp16 (half-float precision), '
                             '%d: VPU, '
                             '%d: VULKAN, '
                             '%d: CUDA, '
                             '%d: CUDA fp16 (half-float preprocess)' % targets)
    args = parser.parse_args()
    App(args).run()
    cv.destroyAllWindows()
```

Object Detection and Post-processing

The YOLO model detects objects, and the results are converted into a format that is easy to work with:

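```python
def detect_objects(frame, model, confidence):
    """Run YOLO on one frame; return boxes as (x, y, w, h) plus their class names."""
    results = model(frame, conf=confidence, verbose=False)  # verbose=False silences per-frame logs
    boxes = []        # bounding boxes (x, y, w, h)
    class_names = []  # class labels
    for result in results:
        for box in result.boxes:
            # Convert corner format (x1, y1, x2, y2) to top-left + size, as OpenCV trackers expect
            x1, y1, x2, y2 = box.xyxy[0].tolist()
            boxes.append((int(x1), int(y1), int(x2 - x1), int(y2 - y1)))
            class_names.append(model.names[int(box.cls[0])])
    return boxes, class_names
```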
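The per-box loop can also be written with NumPy over the whole `(N, 4)` corner array at once. OpenCV trackers expect a top-left `(x, y, w, h)` rectangle, which is why the conversion starts from the `xyxy` corners rather than a center-based format. The following sketch assumes the corners are available as a NumPy array; with ultralytics this would be something like `result.boxes.xyxy.cpu().numpy()`. The helper name `xyxy_to_tlwh` is hypothetical. Unlike the `int()` truncation above, it rounds the box outward so that it never shrinks, and it drops degenerate boxes.

```python
import numpy as np


def xyxy_to_tlwh(xyxy, min_size=1):
    """Convert (N, 4) corner boxes [x1, y1, x2, y2] to integer top-left (x, y, w, h) tuples.

    Boxes narrower or shorter than `min_size` pixels are dropped, since a
    degenerate rectangle is not a useful tracker initialization.
    """
    xyxy = np.asarray(xyxy, dtype=np.float32).reshape(-1, 4)
    tl = np.floor(xyxy[:, :2])  # round the top-left corner down
    br = np.ceil(xyxy[:, 2:])   # round the bottom-right corner up
    wh = br - tl
    keep = (wh >= min_size).all(axis=1)
    tlwh = np.hstack([tl, wh])[keep].astype(int)
    return [tuple(int(v) for v in row) for row in tlwh]


print(xyxy_to_tlwh([[10.4, 20.6, 50.2, 80.9], [5, 5, 5.0, 30]]))
# [(10, 20, 41, 61)]
```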

Check whether a mouse click falls inside a detection box:

```python
def point_in_box(x, y, box):
    # box is (x, y, w, h); the edges count as inside
    bx, by, bw, bh = box
    return bx <= x <= bx + bw and by <= y <= by + bh
```
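When detections overlap, for example a person standing in front of a car, a click can fall inside several boxes. The main loop simply takes the first box YOLO returned. One possible refinement is to prefer the smallest box that contains the click, which often corresponds to the foreground object the user meant. The following sketch implements this as a hypothetical `pick_box` helper.

```python
def point_in_box(x, y, box):
    bx, by, bw, bh = box
    return bx <= x <= bx + bw and by <= y <= by + bh


def pick_box(x, y, boxes):
    """Return the index of the smallest-area (x, y, w, h) box containing (x, y), or None."""
    hits = [i for i, box in enumerate(boxes) if point_in_box(x, y, box)]
    if not hits:
        return None
    return min(hits, key=lambda i: boxes[i][2] * boxes[i][3])


boxes = [(0, 0, 200, 200), (50, 50, 40, 40)]
print(pick_box(60, 60, boxes))    # 1: both boxes contain the click, the second is smaller
print(pick_box(300, 300, boxes))  # None
```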

Main Program Logic

The `main` function coordinates the overall workflow:

```python
def main():
    global selected_box, tracking, target_class, mouse_clicked, debug

    args = parse_args()  # command-line parser; not included in the original listing
    debug = args.debug

    # Make sure the requested tracker can be created (i.e. opencv-contrib is installed)
    test_tracker = create_tracker(args.tracker)
    if test_tracker is None:
        return
    del test_tracker

    # Load the YOLO model
    try:
        model = YOLO(args.model)
    except Exception as e:
        print(f"Failed to load YOLO model: {e}")
        return

    # Open the video source; a numeric string such as "0" is treated as a camera index
    source = int(args.video) if args.video.isdigit() else args.video
    cap = cv2.VideoCapture(source)
    if not cap.isOpened():
        print(f"Cannot open video source: {args.video}")
        return

    # Create the window and register the mouse callback
    window_name = "Tracker"
    cv2.namedWindow(window_name)
    cv2.setMouseCallback(window_name, mouse_callback)

    tracker = None
    font = cv2.FONT_HERSHEY_SIMPLEX

    while True:
        start = cv2.getTickCount()  # used to measure the actual processing FPS
        ret, frame = cap.read()
        if not ret:
            print("End of video")
            break

        # Run YOLO only in detection mode; while tracking, the lightweight tracker does the work
        if tracking:
            boxes, class_names = [], []
        else:
            boxes, class_names = detect_objects(frame, model, args.confidence)

        # Handle a pending left click: track the detected box that contains the click point
        if mouse_clicked:
            if not tracking:
                for box, cls in zip(boxes, class_names):
                    if point_in_box(click_x, click_y, box):
                        selected_box = box
                        target_class = cls
                        tracker = create_tracker(args.tracker)
                        if tracker:
                            tracker.init(frame, selected_box)
                            tracking = True
                            print(f"Tracking {target_class} with {args.tracker}")
                        break
            mouse_clicked = False  # consume the click, including clicks made while tracking

        # Tracking step
        if tracking and tracker:
            ok, bbox = tracker.update(frame)
            if ok:
                x, y, w, h = [int(v) for v in bbox]
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
                cv2.putText(frame, f"Track: {target_class}", (x, y - 10),
                            font, 0.5, (0, 255, 0), 2)
            else:
                # Drawn below the hint line so the two texts do not overlap
                cv2.putText(frame, "Lost", (10, 60), font, 0.7, (0, 0, 255), 2)
                tracking = False

        # When not tracking, draw every detection
        if not tracking:
            for (x, y, w, h), cls in zip(boxes, class_names):
                cv2.rectangle(frame, (x, y), (x + w, y + h), (255, 0, 0), 2)
                cv2.putText(frame, cls, (x, y - 10), font, 0.5, (255, 0, 0), 2)

        # On-screen hints
        hint = "Right click to stop" if tracking else "Left click to select"
        cv2.putText(frame, hint, (10, 30), font, 0.7, (0, 255, 255), 2)
        cv2.putText(frame, f"Tracker: {args.tracker}", (10, frame.shape[0] - 30),
                    font, 0.5, (255, 255, 0), 2)
        # Processing FPS from frame read to here; cap.get(cv2.CAP_PROP_FPS) would only
        # report the nominal frame rate stored in the video source
        fps = cv2.getTickFrequency() / (cv2.getTickCount() - start)
        cv2.putText(frame, f"FPS: {fps:.1f}",
                    (10, frame.shape[0] - 10), font, 0.5, (255, 255, 0), 2)

        # Show the frame
        cv2.imshow(window_name, frame)

        # Press q to quit
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    cap.release()
    cv2.destroyAllWindows()


if __name__ == "__main__":
    main()
```

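Usage

Environment Setup

First, install the required dependencies. Install `opencv-contrib-python` only: it already contains the main OpenCV modules. The OpenCV packages on PyPI share the `cv2` namespace and should not be installed side by side with `opencv-python`.

```shell
pip install opencv-contrib-python ultralytics numpy
```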
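Because `cv2.legacy` only exists in the contrib build, a quick check after installation can save debugging time. The following small sketch reports which packages are importable and whether the legacy tracker constructors are present. It does not fail if something is missing. The function name `check_environment` is hypothetical.

```python
import importlib
import importlib.util


def check_environment():
    """Report importability of the required packages and presence of the cv2.legacy trackers."""
    report = {name: importlib.util.find_spec(name) is not None
              for name in ("cv2", "numpy", "ultralytics")}
    report["cv2.legacy trackers"] = False
    if report["cv2"]:
        try:
            cv2 = importlib.import_module("cv2")
        except ImportError:  # installed but not loadable (e.g. missing system libraries)
            report["cv2"] = False
            return report
        legacy = getattr(cv2, "legacy", None)
        report["cv2.legacy trackers"] = all(
            hasattr(legacy, n)
            for n in ("TrackerMOSSE_create", "TrackerCSRT_create", "TrackerKCF_create")
        )
    return report


if __name__ == "__main__":
    for item, ok in check_environment().items():
        print(f"{item:22s} {'OK' if ok else 'MISSING'}")
```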

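Command-Line Arguments

The program supports the following command-line arguments:

- `--video`: path of the video source, default "xxxxx.mp4"; pass "0" to use the camera

- `--confidence`: detection confidence threshold, default 0.5

- `--model`: YOLO model path, default "yolo12n.pt" (downloaded automatically)

- `--tracker`: tracking algorithm, one of "mosse", "csrt", "kcf"; default "mosse"

- `--debug`: enable debug mode and print extra information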
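The listing of `main()` calls `parse_args()` without showing it. The following sketch of that function matches the parameters above and uses `argparse`. The help strings are paraphrased, and the `xxxxx.mp4` default is kept as the placeholder given in the article.

```python
import argparse


def parse_args(argv=None):
    """Command-line options for the click-to-track demo (reconstructed from the parameter list)."""
    parser = argparse.ArgumentParser(description="YOLO + OpenCV click-to-track demo")
    parser.add_argument("--video", type=str, default="xxxxx.mp4",
                        help='video file path, or a camera index such as "0"')
    parser.add_argument("--confidence", type=float, default=0.5,
                        help="YOLO detection confidence threshold")
    parser.add_argument("--model", type=str, default="yolo12n.pt",
                        help="YOLO model path (downloaded automatically if missing)")
    parser.add_argument("--tracker", type=str, default="mosse",
                        choices=["mosse", "csrt", "kcf"], help="tracking algorithm")
    parser.add_argument("--debug", action="store_true", help="print extra debugging output")
    return parser.parse_args(argv)  # argv=None means sys.argv[1:]


args = parse_args(["--tracker", "csrt", "--video", "0"])
print(args.tracker, args.video, args.confidence, args.debug)  # csrt 0 0.5 False
```

Restricting `--tracker` with `choices` makes argparse reject unsupported names up front, so the MOSSE fallback inside `create_tracker` is never reached. Drop `choices` to keep that fallback behavior.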

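Controls

- After launch, the program starts in detection mode and marks every detected object with a blue box

- Left-click a box to start tracking that object; the tracked target is drawn with a green box

- Right-click to stop tracking and return to detection mode

- Press "q" to quit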

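Tracker Comparison

The three tracking algorithms make different trade-offs:

- MOSSE: the fastest, suited to scenarios with strict real-time requirements, but comparatively less accurate

- CSRT: the most accurate of the three, but slower, suited to scenarios where accuracy matters most

- KCF: in between, balancing speed and accuracy

Choose the tracker that matches the application's speed and accuracy requirements.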

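Summary and Extensions

The tracking system presented here combines YOLO's strong detection capability with the efficiency of classical tracking algorithms. Simple mouse interaction is enough to select and track any detected target. Possible extensions include:

- Multi-object tracking

- ReID (re-identification) to handle target occlusion

- A behavior-analysis module for tracked targets

- A better automatic re-detection mechanism after tracking fails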
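One simple re-acquisition strategy for the last item works as follows. Remember the last box the tracker reported. When the target is lost, pick the new detection of the same class that overlaps that box most, measured by intersection over union (IoU), provided the overlap exceeds a threshold. The following sketch covers the matching step only. The helper `reacquire`, the 0.3 threshold, and the same-class rule are illustrative assumptions, not part of the article's code.

```python
def iou(a, b):
    """Intersection over union of two (x, y, w, h) boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    iw = max(0, min(ax + aw, bx + bw) - max(ax, bx))
    ih = max(0, min(ay + ah, by + bh) - max(ay, by))
    inter = iw * ih
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def reacquire(last_box, target_class, boxes, class_names, min_iou=0.3):
    """Return the same-class detection with the highest IoU to last_box, or None."""
    best, best_iou = None, min_iou
    for box, cls in zip(boxes, class_names):
        if cls != target_class:
            continue
        score = iou(last_box, box)
        if score >= best_iou:
            best, best_iou = box, score
    return best


last = (100, 100, 50, 50)
dets = [(105, 102, 50, 50), (300, 300, 50, 50), (100, 100, 50, 50)]
names = ["car", "person", "person"]
print(reacquire(last, "person", dets, names))  # (100, 100, 50, 50)
```

In the main loop, a non-`None` result could be passed to a freshly created tracker's `init` instead of switching straight back to detection mode.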

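Beyond serving as a quick starting point for practical tracking applications, the system illustrates the combined detection-and-tracking approach and provides a foundation for building more complex computer-vision systems.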